Processing Giant Excel Files in Laravel Without Errors: Safe & Efficient Way

Hello, Laravel developers!

Have you ever been in a situation where you had to import data from a fairly large Excel file? Maybe user data, product data, or sales reports reaching 1000, 2000, or even more rows.

You try the simplest way: read the file, then do a foreach loop for each row. When executed… BOOM!

Fatal error: Allowed memory size of 134217728 bytes exhausted (tried to allocate 20480 bytes)Or maybe this?

Maximum execution time of 60 seconds exceededFrustrating, right? These errors are nightmares for every developer handling large file processing. But don’t worry, Laravel has an elegant and efficient solution for this problem.

In this tutorial, we will learn how to process Excel files with thousands of rows of data safely, without consuming a lot of memory, and without making users wait long. We will use Laravel’s flagship package: Maatwebsite Laravel Excel.

Table of Contents

- Why the ‘Usual’ Way Fails?

- Step 1: Preparation and Installation

- Step 2: Creating a Data Import Scenario

- Step 3: Creating the Import Class

- Step 4: Implementing Chunking

- Step 5: Improving Reliability with Batching

- Step 6: Integrating in Controller

- Bonus: The Best Solution - Process in Background with Queue

- Conclusion

Why the ‘Usual’ Way Fails?

Before we get to the solution, let’s understand the root of the problem first.

- Memory Exhaustion (Running Out of Memory): The standard way to read Excel files is to load the entire file contents into RAM at once. Imagine, one row of data in Excel could take up 10KB of memory. With 2000 rows, you’ve already needed 20MB just to store data in memory, not to mention other processes. This is what causes the

Allowed memory size exhaustederror. - Script Timeout (Execution Time Exceeded): PHP has a maximum time limit for script execution (usually 30-60 seconds). If the loop process for 2000 rows takes more than that, the script will stop and produce a

Maximum execution time exceedederror.

The solution is not to increase the memory limit or execution time (that only covers up the problem, not solving it), but to change the way we process files. The concept is simple: Don’t eat everything at once, chew little by little. This concept is called Chunking.

Step 1: Preparation and Installation

Make sure you already have a Laravel project. If not, create a new one with composer create-project laravel/laravel project-name.

We will install the Laravel Excel package. Open the terminal in your project directory and run the command:

composer require maatwebsite/excelThis package will be automatically registered in Laravel. For older Laravel versions, you may need to add ServiceProvider and alias in config/app.php, but for modern Laravel, this is no longer necessary.

Step 2: Creating a Data Import Scenario

To make this tutorial real, we will create a scenario for importing Product data from an Excel file.

1. Create Model and Migration for Product:

php artisan make:model Product -mOpen the newly created migration file in database/migrations/ and adjust the structure.

// database/migrations/xxxx_xx_xx_xxxxxx_create_products_table.php

public function up()

{

Schema::create('products', function (Blueprint $table) {

$table->id();

$table->string('name');

$table->string('category');

$table->integer('price');

$table->timestamps();

});

}Don’t forget to run the migration:

php artisan migrateStep 3: Creating the Import Class

The Laravel Excel package has an artisan command to create an import class. This is the most structured way.

php artisan make:import ProductsImport --model=ProductThis will create the file app/Imports/ProductsImport.php. Let’s open it and see its contents.

By default, this class has a model() method that will create an instance of Product for each row. However, for better flexibility (such as validation or custom logic), we will use the collection() method.

Change the ProductsImport.php file to this:

<?php

namespace App\Imports;

use App\Models\Product;

use Illuminate\Support\Collection;

use Maatwebsite\Excel\Concerns\ToCollection;

class ProductsImport implements ToCollection

{

/**

* @param Collection $collection

*/

public function collection(Collection $rows)

{

foreach ($rows as $row)

{

// Assume the first row is header, so we skip

if ($row[0] === 'Name') {

continue;

}

Product::create([

'name' => $row[0], // Column A

'category' => $row[1], // Column B

'price' => $row[2], // Column C

]);

}

}

}Explanation:

ToCollection: This interface gives us access to Laravel’sCollectionfor each row (or chunk) being processed.$row[0],$row[1],$row[2]: This is access to columns in Excel.$row[0]is column A,$row[1]is column B, and so on.if ($row[0] === 'Name'): This is a simple way to skip the header row in your Excel file.

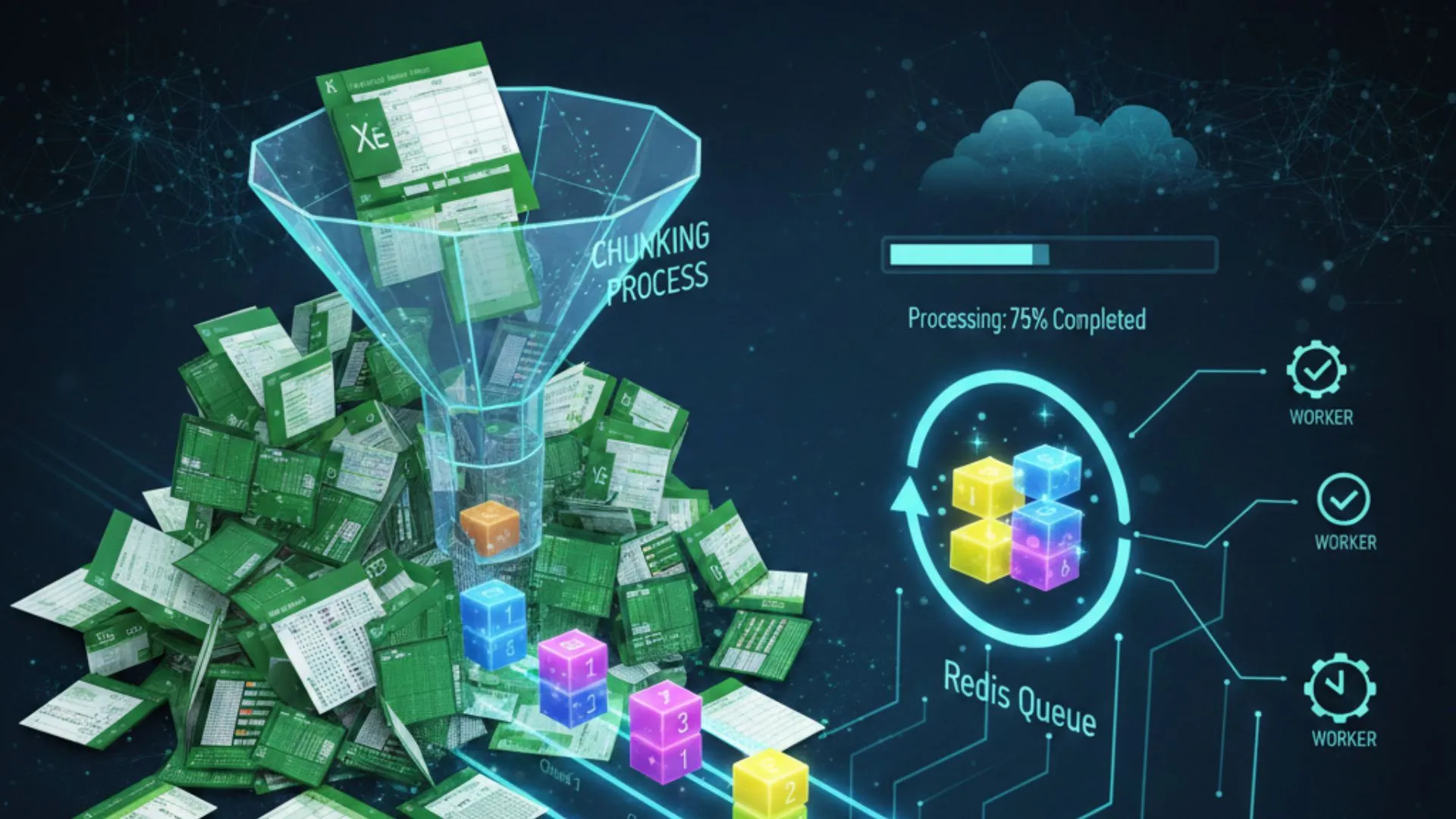

Step 4: Implementing Chunking (Solution to Memory Problems)

Now we get to the most important part. We will implement chunking so that memory doesn’t run out.

It’s very easy, we just need to add the WithChunkReading interface to our ProductsImport class.

<?php

namespace App\Imports;

use App\Models\Product;

use Illuminate\Support\Collection;

use Maatwebsite\Excel\Concerns\ToCollection;

use Maatwebsite\Excel\Concerns\WithChunkReading; // Add this

class ProductsImport implements ToCollection, WithChunkReading // Add this interface

{

// ... collection() method remains the same ...

/**

* Determine the chunk size (number of rows per process)

*

* @return int

*/

public function chunkSize(): int

{

return 500; // Process 500 rows each time

}

}What happens here?

By adding WithChunkReading and defining the chunkSize() method, we instruct Laravel Excel to:

- Open the Excel file.

- Only read the first 500 rows and load them into memory.

- Throw these 500 rows to the

collection()method. - After finishing, discard these 500 rows from memory.

- Continue reading the next 500 rows, and repeat the process until finished.

With this way, memory usage will remain low and stable, no matter how large your Excel file is!

Step 5: Improving Reliability with Batching

What happens if one row of data is corrupted among the 500 rows we process? The database insert process could fail halfway, and our data becomes inconsistent.

The solution is Batching. With batching, we can wrap the insert process for each chunk into a database transaction. If one row fails, then all 500 rows in that chunk will be canceled (rollback).

To do this, add the WithBatchInserts interface.

<?php

namespace App\Imports;

use App\Models\Product;

use Illuminate\Support\Collection;

use Maatwebsite\Excel\Concerns\ToCollection;

use Maatwebsite\Excel\Concerns\WithChunkReading;

use Maatwebsite\Excel\Concerns\WithBatchInserts; // Add this

class ProductsImport implements ToCollection, WithChunkReading, WithBatchInserts // Add this interface

{

// ... collection() and chunkSize() methods remain the same ...

/**

* Determine the batch size. Must be the same as chunkSize.

*

* @return int

*/

public function batchSize(): int

{

return 500;

}

}Now, every time Laravel Excel processes 500 rows, it will run all Product::create() queries inside a single database transaction. This maintains the integrity of your data.

Step 6: Integrating in Controller

Finally, let’s create a controller and route to handle file uploads.

1. Create Controller:

php artisan make:controller ProductController2. Add Method in ProductController.php:

<?php

namespace App\Http\Controllers;

use Illuminate\Http\Request;

use App\Imports\ProductsImport;

use Maatwebsite\Excel\Facades\Excel;

class ProductController extends Controller

{

public function showUploadForm()

{

return view('upload');

}

public function upload(Request $request)

{

// Validate file

$request->validate([

'excel_file' => 'required|mimes:xlsx,xls,csv|max:2048', // Max 2MB

]);

// Process import

Excel::import(new ProductsImport, $request->file('excel_file'));

return redirect()->back()->with('success', 'Product data imported successfully!');

}

}3. Create Route in routes/web.php:

use App\Http\Controllers\ProductController;

Route::get('/upload', [ProductController::class, 'showUploadForm'])->name('upload.form');

Route::post('/upload', [ProductController::class, 'upload'])->name('upload.process');4. Create View resources/views/upload.blade.php:

<!DOCTYPE html>

<html lang="en">

<head>

<meta charset="UTF-8">

<title>Upload Excel</title>

<!-- Add CSS if needed, e.g., Bootstrap -->

</head>

<body>

<h1>Upload Product Excel File</h1>

@if(session('success'))

<div style="color: green;">

{{ session('success') }}

</div>

@endif

<form action="{{ route('upload.process') }}" method="POST" enctype="multipart/form-data">

@csrf

<div>

<label for="excel_file">Select Excel File:</label>

<input type="file" name="excel_file" id="excel_file" required>

</div>

<br>

<button type="submit">Import</button>

</form>

</body>

</html>Now, try accessing /upload in the browser, upload your Excel file, and see the results. The process will be much faster and more stable!

Bonus: The Best Solution - Process in Background with Queue

For files with 1000-2000 rows, the chunking method above is already very sufficient. But what if the file is 50,000 rows? Or if the process for each row is very complex (e.g., calling external APIs)?

The user will still wait in the browser until the process finishes, which could cause timeouts.

The best solution is to move this heavy process to background queue.

1. Configure Queue Driver:

Open .env and change QUEUE_CONNECTION to database.

QUEUE_CONNECTION=databaseCreate a table for job queues:

php artisan queue:table

php artisan migrate2. Create Queueable Import Class (ShouldQueue)

Back to the ProductsImport.php file, add the ShouldQueue interface.

<?php

namespace App\Imports;

use App\Models\Product;

use Illuminate\Support\Collection;

use Maatwebsite\Excel\Concerns\ToCollection;

use Maatwebsite\Excel\Concerns\WithChunkReading;

use Maatwebsite\Excel\Concerns\WithBatchInserts;

use Illuminate\Contracts\Queue\ShouldQueue; // Add this

class ProductsImport implements ToCollection, WithChunkReading, WithBatchInserts, ShouldQueue // Add this interface

{

// ... all other methods remain the same ...

}That’s it! Now, when you call Excel::import(), Laravel will not process it directly. Instead, it will put a job into the jobs table.

3. Run Queue Worker

To have the job executed, you need to run the queue worker process in the terminal. Open a new terminal and run:

php artisan queue:workNow, try uploading the file again. You will get the response “Product data imported successfully!” immediately (even though the process hasn’t finished yet), and in the queue:work terminal, you will see logs that the import process is running in the background.

Conclusion

Processing large files is no longer a nightmare in Laravel. With the Maatwebsite Laravel Excel package and the right strategy, you can do it safely and efficiently.

- Problem:

memory limitandtimeout. - Solution 1 (Good): Use

WithChunkReadingto process data gradually and keep memory usage low. - Solution 2 (Better): Add

WithBatchInsertsto maintain data integrity with database transactions. - Solution 3 (Best): Implement

ShouldQueueto process files in the background, providing an amazing user experience and completely eliminating timeout issues.

Try it out, and hopefully no more memory exhausted errors haunt your code!