AI for Natural Language Processing: Build Smarter Text Applications

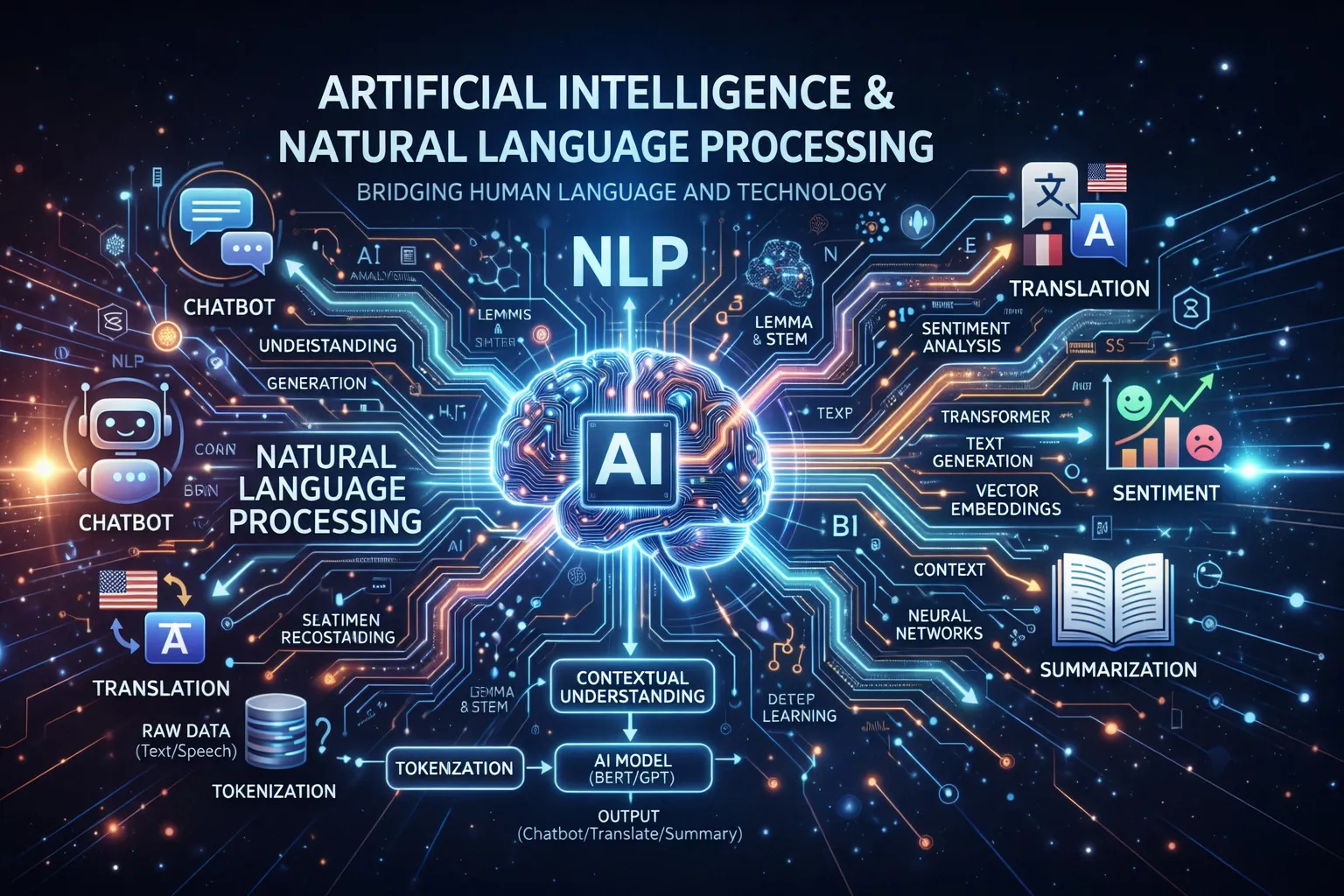

Natural Language Processing (NLP) is one of the most engaging areas of AI. It lets computers understand, interpret, and generate human language, and it underpins chatbots, search engines, content summarization, and sentiment analysis.

In this article, we'll explore how to use AI and NLP to build smarter text applications with Python.

Table of Contents

- What Is NLP?

- Core NLP Tasks

- Getting Started with NLP in Python

- Sentiment Analysis

- Text Classification

- Named Entity Recognition

- Building a Simple Chatbot

- Using Pre-trained Language Models

- Best Practices

- Conclusion

- Resources

What Is NLP?

Natural Language Processing (NLP) is the branch of AI concerned with the interaction between computers and human language. It combines linguistics, computer science, and machine learning to process and analyze large volumes of natural-language data.

Why Does NLP Matter?

- Automation: Automate customer support with capable chatbots

- Insight: Extract useful insight from unstructured text data

- Accessibility: Translate content so that more people can use it

- Efficiency: Summarize long documents in seconds

- Personalization: Understand user intent to make better recommendations

Core NLP Tasks

NLP covers a wide range of tasks:

| Task | Description | Example |
|---|---|---|
| Sentiment Analysis | Determine the emotional tone of a text | Classifying product reviews |
| Text Classification | Assign text to categories | Spam detection |
| Named Entity Recognition | Identify entities in text | Extracting names, dates, locations |
| Machine Translation | Translate between languages | English to Indonesian |
| Text Summarization | Condense long documents | News article summaries |
| Question Answering | Answer questions from a given context | Chatbots, search engines |

Getting Started with NLP in Python

Python has a rich ecosystem for NLP. The libraries used in this article can be installed with:

pip install nltk spacy transformers torch scikit-learn pandas matplotlib seaborn
python -m spacy download en_core_web_sm

Basic Text Processing with NLTK

import nltk
from nltk.corpus import stopwords
from nltk.stem import PorterStemmer
from nltk.tokenize import sent_tokenize, word_tokenize

# Download the tokenizer models and the stopword list (only needed once)
nltk.download('punkt')
nltk.download('punkt_tab')  # newer NLTK releases look for this resource instead of 'punkt'
nltk.download('stopwords')

text = "Natural Language Processing is transforming how computers understand human language. It enables smarter applications."

# Tokenization: split the text into words and into sentences
words = word_tokenize(text)
sentences = sent_tokenize(text)
print("Words:", words[:10])
print("Sentences:", sentences)

# Remove stopwords (very common words such as "is" and "how")
stop_words = set(stopwords.words('english'))
filtered_words = [w for w in words if w.lower() not in stop_words]
print("Filtered:", filtered_words)

# Stemming: reduce each word to its stem
stemmer = PorterStemmer()
stemmed = [stemmer.stem(w) for w in filtered_words]
print("Stemmed:", stemmed)

Text Processing with spaCy

import spacy

nlp = spacy.load("en_core_web_sm")
doc = nlp("Apple is looking at buying U.K. startup for $1 billion")

# Print the part-of-speech tag and dependency label of each token
for token in doc:
    print(f"{token.text:15} {token.pos_:10} {token.dep_}")

Sentiment Analysis

Sentiment analysis determines the emotional tone of a text: positive, negative, or neutral. It is especially useful for analyzing product reviews, customer feedback, and social media comments.

Using VADER for Simple Sentiment Analysis
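As a rough illustration of the lexicon-based idea that tools such as VADER build on, the sketch below sums per-word weights and flips the sign of a word that follows a negation. The word list, the weights, and the 0.5 threshold are made-up assumptions for this example; they are not VADER's actual lexicon or rules.

```python
import re

# Toy lexicon: hypothetical words and weights chosen for illustration only.
LEXICON = {
    "amazing": 2.0, "best": 1.5, "good": 1.0, "okay": 0.3,
    "terrible": -2.0, "waste": -1.5, "bad": -1.0,
}
NEGATIONS = {"not", "never", "no"}


def lexicon_score(text: str) -> float:
    """Sum word weights, flipping the sign of a word right after a negation."""
    tokens = re.findall(r"[a-z']+", text.lower())
    score = 0.0
    for i, token in enumerate(tokens):
        weight = LEXICON.get(token, 0.0)
        if i > 0 and tokens[i - 1] in NEGATIONS:
            weight = -weight
        score += weight
    return score


def label(score: float, threshold: float = 0.5) -> str:
    """Map a raw score to a coarse sentiment label."""
    if score >= threshold:
        return "positive"
    if score <= -threshold:
        return "negative"
    return "neutral"


for review in ["This product is amazing", "Not good at all", "It arrived on Tuesday"]:
    print(review, "->", label(lexicon_score(review)))
```

A real lexicon-based analyzer also accounts for things like intensifiers, punctuation, and capitalization, which is why a maintained library is usually the better choice.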

import nltk
from nltk.sentiment.vader import SentimentIntensityAnalyzer

# Download the VADER lexicon (only needed once)
nltk.download('vader_lexicon')

sia = SentimentIntensityAnalyzer()

reviews = [
    "This product is absolutely amazing! Best purchase ever.",
    "Terrible quality. Complete waste of money.",
    "It's okay, nothing special but gets the job done."
]

for review in reviews:
    scores = sia.polarity_scores(review)
    # The compound score ranges from -1 (most negative) to +1 (most positive)
    if scores['compound'] >= 0.05:
        sentiment = "Positive 😊"
    elif scores['compound'] <= -0.05:
        sentiment = "Negative 😞"
    else:
        sentiment = "Neutral 😐"
    print(f"Review: {review[:50]}...")
    print(f"Sentiment: {sentiment} (score: {scores['compound']:.2f})\n")

Training a Custom Sentiment Classifier

import pandas as pd
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import classification_report
from sklearn.model_selection import train_test_split

# Toy dataset (far too small for a real model; for illustration only)
data = {
    'text': [
        "Great product, highly recommend!",
        "Awful experience, never buying again.",
        "Pretty good, does what it says.",
        "Broken on arrival. Very disappointed.",
        "Exceeded my expectations!",
        "Not worth the price at all.",
        "Works perfectly for my needs.",
        "Poor customer service.",
    ],
    'label': [1, 0, 1, 0, 1, 0, 1, 0]  # 1 = positive, 0 = negative
}
df = pd.DataFrame(data)

# Split the data; stratify keeps both classes in the tiny test set
X_train, X_test, y_train, y_test = train_test_split(
    df['text'], df['label'], test_size=0.2, random_state=42, stratify=df['label']
)

# Feature extraction: fit on the training set only, then reuse for the test set
vectorizer = TfidfVectorizer(ngram_range=(1, 2), max_features=5000)
X_train_vec = vectorizer.fit_transform(X_train)
X_test_vec = vectorizer.transform(X_test)

# Train the classifier
classifier = LogisticRegression()
classifier.fit(X_train_vec, y_train)

# Evaluate
predictions = classifier.predict(X_test_vec)
print(classification_report(y_test, predictions, zero_division=0))

# Predict on new text
new_text = ["This is fantastic, I love it!"]
new_vec = vectorizer.transform(new_text)
prediction = classifier.predict(new_vec)
print(f"Prediction: {'Positive' if prediction[0] == 1 else 'Negative'}")

Text Classification

Text classification assigns predefined categories to a piece of text. It is used for spam detection, topic categorization, support ticket routing, and much more.

Multi-Class Text Classifier
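The classifiers in this article turn text into TF-IDF features before assigning a category. The sketch below computes the textbook weighting (term frequency times the log of inverse document frequency) by hand on three made-up documents. It is an assumption-laden simplification: scikit-learn's TfidfVectorizer uses a smoothed formula and normalizes each vector, so its numbers will differ.

```python
import math
from collections import Counter


def tf_idf(docs: list[list[str]]) -> list[dict[str, float]]:
    """Return one {term: weight} dict per tokenized document."""
    n_docs = len(docs)
    # Document frequency: in how many documents does each term appear?
    df = Counter(term for doc in docs for term in set(doc))
    weights = []
    for doc in docs:
        counts = Counter(doc)
        weights.append({
            term: (count / len(doc)) * math.log(n_docs / df[term])
            for term, count in counts.items()
        })
    return weights


# Hypothetical mini-corpus, already lowercased and split on whitespace.
docs = [
    "win a free prize now".split(),
    "meeting moved to friday".split(),
    "free tickets for the meeting".split(),
]
weights = tf_idf(docs)

# Terms that occur in fewer documents receive a higher weight.
for term, weight in sorted(weights[0].items(), key=lambda kv: -kv[1]):
    print(f"{term:8} {weight:.3f}")
```

Words that appear in only one document, such as "prize", end up with more weight than words shared across documents, such as "free", which is what lets a linear model pick out category-specific vocabulary.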

from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.pipeline import Pipeline
from sklearn.svm import LinearSVC

# Training data spanning several categories
training_data = [
    ("Python is great for machine learning", "Technology"),
    ("Real Madrid won the Champions League", "Sports"),
    ("Stock market hits record high", "Finance"),
    ("New vaccine shows promising results", "Health"),
    ("Scientists discover new planet", "Science"),
    ("JavaScript frameworks are evolving fast", "Technology"),
    ("World Cup final was thrilling", "Sports"),
    ("Inflation rate rises to 8%", "Finance"),
]
texts, labels = zip(*training_data)

# Build the pipeline (LinearSVC handles multiple classes one-vs-rest on its own)
pipeline = Pipeline([
    ('tfidf', TfidfVectorizer(ngram_range=(1, 2))),
    ('classifier', LinearSVC())
])

# Train
pipeline.fit(texts, labels)

# Test on unseen sentences
test_texts = [
    "Machine learning is transforming industries",
    "The football match ended in a draw",
    "GDP growth slows down this quarter"
]
predictions = pipeline.predict(test_texts)
for text, category in zip(test_texts, predictions):
    print(f"'{text}' -> {category}")

Named Entity Recognition

NER identifies and classifies named entities in text, such as people, organizations, dates, and locations. It is very useful for extracting structured information from free text.

NER with spaCy
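To get a feel for what "structured information from free text" means, here is a minimal sketch that pulls out two entity types with regular shapes, money amounts and dates, using only regular expressions. The patterns and the sample sentence are illustrative assumptions and are far less robust than a trained NER model.

```python
import re

# Illustrative patterns only; real text needs far more coverage than this.
PATTERNS = {
    "MONEY": re.compile(r"\$\d+(?:\.\d+)?(?:\s(?:million|billion))?"),
    "DATE": re.compile(
        r"(?:(?:January|February|March|April|May|June|July|August|"
        r"September|October|November|December)\s)?\b(?:19|20)\d{2}\b"
    ),
}


def extract_entities(text: str) -> list[tuple[str, str, int, int]]:
    """Return (text, label, start, end) tuples sorted by position."""
    found = []
    for label, pattern in PATTERNS.items():
        for match in pattern.finditer(text):
            found.append((match.group(), label, match.start(), match.end()))
    return sorted(found, key=lambda item: item[2])


sample = "SpaceX raised $100 million, and in December 2015 it landed a rocket."
for entity in extract_entities(sample):
    print(entity)
```

Names of people, companies, and places have no such fixed shape, which is where a statistical model becomes necessary.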

import spacy

nlp = spacy.load("en_core_web_sm")

text = """
Elon Musk founded SpaceX in 2002 in Hawthorne, California.
The company raised $100 million in its Series A funding round.
In December 2015, SpaceX successfully landed the first orbital rocket.
"""

doc = nlp(text)

print("Entities found:")
print("-" * 40)
for ent in doc.ents:
    # spacy.explain returns a short description of the entity label
    print(f"{ent.text:30} {ent.label_:15} {spacy.explain(ent.label_)}")

Training a Custom NER Model

import random

import spacy
from spacy.training import Example

# Start from a blank English pipeline
nlp = spacy.blank("en")

# Add an NER component
ner = nlp.add_pipe("ner")

# Register the custom entity labels (PRODUK = product, PERUSAHAAN_TECH = tech company)
ner.add_label("PRODUK")
ner.add_label("PERUSAHAAN_TECH")

# Training data: each entity is (start, end, label) in character offsets
TRAIN_DATA = [
    ("Apple released iPhone 15 yesterday.", {
        "entities": [(0, 5, "PERUSAHAAN_TECH"), (15, 24, "PRODUK")]
    }),
    ("Google announced Gemini AI model.", {
        "entities": [(0, 6, "PERUSAHAAN_TECH"), (17, 26, "PRODUK")]
    }),
]
examples = [
    Example.from_dict(nlp.make_doc(text), annotations)
    for text, annotations in TRAIN_DATA
]

# Train the model (nlp.initialize replaces the deprecated nlp.begin_training in spaCy v3)
optimizer = nlp.initialize(lambda: examples)
for epoch in range(20):
    random.shuffle(examples)
    losses = {}
    for example in examples:
        nlp.update([example], sgd=optimizer, losses=losses)
    if epoch % 5 == 0:
        print(f"Epoch {epoch}, Loss: {losses['ner']:.4f}")

# Test (two training sentences are far too few to generalize, so this may print nothing)
test_doc = nlp("Microsoft launched Copilot AI.")
for ent in test_doc.ents:
    print(f"{ent.text}: {ent.label_}")

Building a Simple Chatbot

A Rule-Based Chatbot with NLP

import random
import re

import nltk
from nltk.stem import WordNetLemmatizer
from nltk.tokenize import word_tokenize

# Resources needed by word_tokenize and WordNetLemmatizer (only needed once)
nltk.download('punkt')
nltk.download('punkt_tab')  # newer NLTK releases look for this resource instead of 'punkt'
nltk.download('wordnet')

lemmatizer = WordNetLemmatizer()

# Intent patterns (a mix of English and Indonesian keywords)
intents = {
    'salam': {  # greeting
        'patterns': ['hello', 'hi', 'hey', 'halo', 'selamat pagi', 'selamat siang'],
        'responses': ['Hello! How can I help you?', 'Hi! What can I do for you today?']
    },
    'pamit': {  # goodbye
        'patterns': ['bye', 'goodbye', 'sampai jumpa', 'dadah', 'selamat tinggal'],
        'responses': ['See you! Have a great day!', 'Bye! Feel free to come back anytime.']
    },
    'bantuan': {  # help
        'patterns': ['bantu', 'help', 'support', 'masalah', 'problem', 'error'],
        'responses': ["I'm ready to help! What's the problem?", 'Sure, tell me about your issue!']
    },
    'terima_kasih': {  # thanks
        'patterns': ['terima kasih', 'thanks', 'makasih', 'thx'],
        'responses': ["You're welcome!", 'My pleasure!', "Whenever you need help, I'm here!"]
    }
}

def preprocess(text):
    """Lowercase, tokenize, and lemmatize the input."""
    tokens = word_tokenize(text.lower())
    return [lemmatizer.lemmatize(token) for token in tokens]

def get_response(user_input):
    processed_text = ' '.join(preprocess(user_input))
    for intent, data in intents.items():
        for pattern in data['patterns']:
            # Match whole words only, so 'hi' does not fire on 'this'
            if re.search(rf"\b{re.escape(pattern)}\b", processed_text):
                return random.choice(data['responses'])
    return "Sorry, I didn't quite get that. Could you rephrase it?"

# Chat loop
print("Chatbot: Hello! I'm a simple NLP chatbot. Type 'quit' to exit.")
while True:
    user_input = input("You: ")
    if user_input.lower() == 'quit':
        break
    response = get_response(user_input)
    print(f"Chatbot: {response}")

Using Pre-trained Language Models

The real strength of modern NLP lies in pre-trained transformer models such as BERT, GPT, and their many variants. These models have already been trained on very large text corpora, so we can use them as they are or fine-tune them for a specific task.

Sentiment Analysis with Transformers

from transformers import pipeline

# Load a pre-trained sentiment analysis model.
# No model is named here, so the library falls back to a default English
# checkpoint and logs a warning; pin a model name for reproducible results.
sentiment_pipeline = pipeline("sentiment-analysis")

texts = [
    "The new AI features are incredibly impressive!",
    "This implementation has too many bugs.",
    "The performance is acceptable for most use cases."
]

results = sentiment_pipeline(texts)

for text, result in zip(texts, results):
    print(f"Text: {text}")
    print(f"Sentiment: {result['label']} (confidence: {result['score']:.2%})\n")

Text Summarization with Transformers

from transformers import pipeline

summarizer = pipeline("summarization", model="facebook/bart-large-cnn")

teks_panjang = """
Artificial intelligence (AI) is intelligence demonstrated by machines, as opposed to natural
intelligence displayed by animals including humans. AI research has been defined as the field
of study of intelligent agents, which refers to any system that perceives its environment and
takes actions that maximize its chance of achieving its goals. The term "artificial intelligence"
had previously been used to describe machines that mimic and display "human" cognitive skills
associated with the human mind, such as "learning" and "problem-solving". This definition has
since been rejected by major AI researchers who now describe AI in terms of rationality and
acting rationally, which does not limit how intelligence can be articulated.
"""

# max_length and min_length bound the summary length in tokens;
# do_sample=False makes the output deterministic
summary = summarizer(
    teks_panjang,
    max_length=100,
    min_length=30,
    do_sample=False
)

print("Summary:", summary[0]['summary_text'])

Question Answering

from transformers import pipeline

# Extractive QA: the answer is a span copied from the context
qa_pipeline = pipeline("question-answering")

context = """
Python was created by Guido van Rossum and first released in 1991. It is a high-level,
general-purpose programming language. Python's design philosophy emphasizes code readability
with the use of significant indentation. Python is dynamically typed and garbage-collected.
It supports multiple programming paradigms, including structured, object-oriented, and
functional programming.
"""

questions = [
    "Who created Python?",
    "When was Python first released?",
    "What is Python's design philosophy?"
]

for question in questions:
    result = qa_pipeline(question=question, context=context)
    print(f"Q: {question}")
    print(f"A: {result['answer']} (confidence: {result['score']:.2%})\n")

Best Practices

1. Data Quality

Good data matters a great deal in NLP. Dirty or inconsistent text can noticeably degrade model performance.

import re
import unicodedata

def clean_text(text):
    """Clean and normalize text for NLP processing."""
    # Convert to lowercase
    text = text.lower()
    # Remove HTML tags
    text = re.sub(r'<[^>]+>', '', text)
    # Normalize unicode characters (NFKD splits accented letters into base letter + mark)
    text = unicodedata.normalize('NFKD', text)
    # Remove URLs
    text = re.sub(r'http\S+|www\S+', '', text)
    # Remove special characters (keep ASCII letters, digits, whitespace).
    # Note: this also drops every non-Latin character, so adapt it for other scripts.
    text = re.sub(r'[^a-z0-9\s]', '', text)
    # Collapse repeated whitespace
    text = re.sub(r'\s+', ' ', text).strip()
    return text

# Test
sample = "Check https://example.com for <b>cool</b> AI tools!!!"
cleaned = clean_text(sample)
print(f"Original: {sample}")
print(f"Cleaned: {cleaned}")

2. Model Selection

Pick the model that fits your needs:

| Use Case | Recommended Approach |
|---|---|
| Simple classification | TF-IDF + Logistic Regression |
| Sentiment analysis | Fine-tuned BERT or VADER |
| Text generation | GPT-2 or T5 |
| Multilingual tasks | mBERT or XLM-RoBERTa |
| Limited resources | DistilBERT |

3. Handling Multiple Languages

from transformers import pipeline

# Multilingual model for language detection.
# Check the model card for the list of supported languages: if a language
# (Indonesian, for example) is not covered, its prediction will be unreliable.
classifier = pipeline(
    "text-classification",
    model="papluca/xlm-roberta-base-language-detection"
)

texts = [
    "Hello, how are you?",
    "Bonjour, comment allez-vous?",
    "Halo, apa kabar?"
]

for text in texts:
    result = classifier(text)[0]
    print(f"'{text}' -> Language: {result['label']} ({result['score']:.2%})")

4. Evaluation Metrics

Always evaluate your NLP model with metrics that fit the task, such as accuracy, precision, recall, and F1.
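To make these metrics concrete, the sketch below computes precision, recall, and F1 for a single class by counting true positives, false positives, and false negatives by hand. The function name and the sample labels are made up for illustration.

```python
def precision_recall_f1(y_true, y_pred, positive=1):
    """Compute precision, recall, and F1 for one class treated as positive."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    # Guard against division by zero when a class is never predicted or never present
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


# Hypothetical labels: 1 = positive review, 0 = negative review
y_true = [1, 1, 1, 0, 0, 0]
y_pred = [1, 1, 0, 1, 0, 0]
precision, recall, f1 = precision_recall_f1(y_true, y_pred)
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
```

In practice scikit-learn computes these values for you, as the evaluate_classifier helper that follows does; the hand-rolled version is only meant to show what the numbers measure.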

import matplotlib.pyplot as plt
import seaborn as sns
from sklearn.metrics import (
    accuracy_score,
    confusion_matrix,
    precision_recall_fscore_support,
)

def evaluate_classifier(y_true, y_pred, labels):
    """Comprehensive evaluation of an NLP classifier."""
    # Basic metrics
    accuracy = accuracy_score(y_true, y_pred)
    precision, recall, f1, _ = precision_recall_fscore_support(
        y_true, y_pred, average='weighted', zero_division=0
    )
    print(f"Accuracy: {accuracy:.4f}")
    print(f"Precision: {precision:.4f}")
    print(f"Recall: {recall:.4f}")
    print(f"F1 Score: {f1:.4f}")

    # Confusion matrix; passing labels fixes the row/column order to match the tick labels
    cm = confusion_matrix(y_true, y_pred, labels=labels)
    plt.figure(figsize=(8, 6))
    sns.heatmap(cm, annot=True, fmt='d', xticklabels=labels, yticklabels=labels)
    plt.title('Confusion Matrix')
    plt.ylabel('True Label')
    plt.xlabel('Predicted Label')
    plt.tight_layout()
    plt.savefig('confusion_matrix.png')
    plt.close()

Conclusion

Natural Language Processing opens up many ways to build smarter applications:

- ✅ Sentiment Analysis to understand customer feedback

- ✅ Text Classification to organize and filter content

- ✅ Named Entity Recognition to extract structured information

- ✅ Chatbots to automate customer interactions

- ✅ Pre-trained Models to get strong results with little effort

Start with a simple rule-based approach, then gradually bring in more sophisticated ML models as your needs grow.

Resources

- NLTK Documentation

- spaCy Documentation

- Hugging Face Transformers

- Stanford NLP Group

- Papers With Code — NLP

Have you built an NLP application? Share your experience in the comments! 🤖